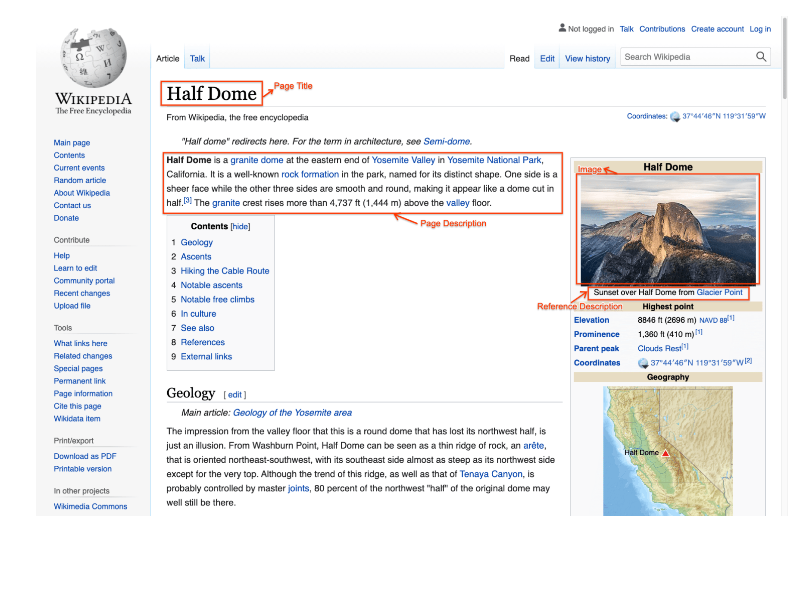

WIT (Wikipedia-based Image Text) is a multimodal, multilingual dataset developed by Google AI. It contains over 37 million image-text pairs in more than 100 languages.

The dataset aims to close a gap in existing image-text datasets, most of which concentrate on English and so miss the chance to use images, a language-independent medium, to improve multilingual text understanding. To address this, Google AI sourced and filtered content from Wikipedia articles and Wikimedia images, with an emphasis on high-quality image-text pairs.

WIT has at least 12,000 examples in each of its 108 languages, and 53 of those languages have more than 100,000 image-text pairs. At the time of its publication, it was the largest multimodal dataset by number of image-text examples. It is intended to support research into how images and text interact across many languages.
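For readers who want to check per-language figures like these on their own copy of the data, the following is a minimal sketch. It assumes the data is a tab-separated file with one image-text pair per row and a column named `language`. The three-row sample, the column names, and the helper functions `count_pairs_by_language` and `languages_at_least` are illustrative assumptions, not part of the dataset's tooling.

```python
import csv
import io
from collections import Counter

# Hypothetical three-row sample. Real files are far larger and have more
# columns; the column names used here are assumptions for illustration.
SAMPLE_TSV = (
    "language\timage_url\tcaption\n"
    "en\thttps://example.org/a.jpg\tA red bridge\n"
    "fr\thttps://example.org/a.jpg\tUn pont rouge\n"
    "en\thttps://example.org/b.jpg\tA mountain lake\n"
)


def count_pairs_by_language(tsv_file, language_column="language"):
    """Count rows (image-text pairs) per language code in a TSV file object."""
    reader = csv.DictReader(tsv_file, delimiter="\t")
    return Counter(row[language_column] for row in reader)


def languages_at_least(counts, threshold):
    """Return the sorted language codes whose pair count meets the threshold."""
    return sorted(lang for lang, n in counts.items() if n >= threshold)


counts = count_pairs_by_language(io.StringIO(SAMPLE_TSV))
print(counts)
print(languages_at_least(counts, 2))
```

On real data, the same functions could be given a file opened with `open(path, newline="", encoding="utf-8")`, and the threshold set to 12,000 or 100,000 to compare against the figures quoted above.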

Intended users:

– Researchers

– Machine learning developers

– Multilingual content creators

– Data scientists

– Visual analytics experts

– Language model trainers

– Multimodal AI enthusiasts