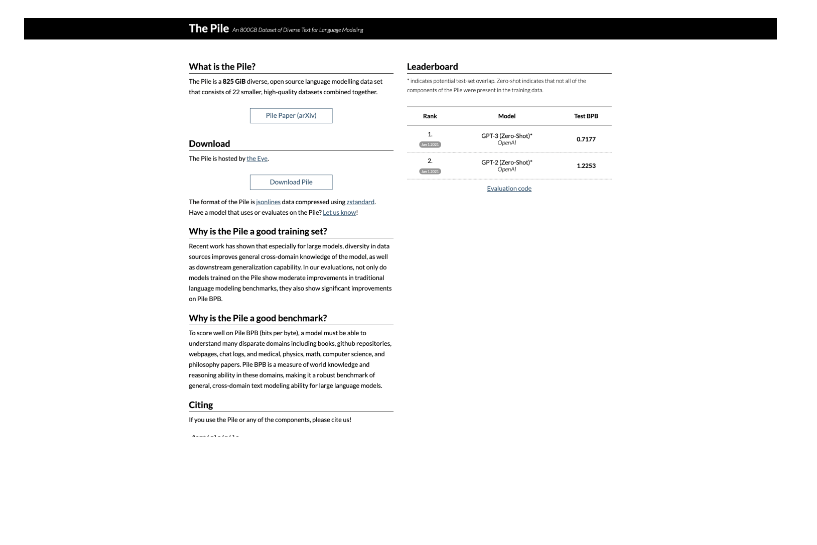

EleutherAI’s “The Pile” is a big 825 GiB open-source language modelling dataset that combines multiple smaller datasets. The variety of text it collects from diverse modalities, intended to improve the generalisation skills of models trained on it, is what makes it stand out.

Recent research supports the significance of such diversity by indicating that varied data sources enrich the cross-domain expertise of large models, enhancing their performance on both general benchmarks for language modelling and more specialised benchmarks like Pile BPB. The pile is essentially a tool for training AI models to learn more and be applied in more areas.

User objects:

– Language model researchers

– Data scientists

– Natural language processing (NLP) developers

– AI educators

– Content recommendation system developers

– Search engine developers

– Linguists studying computational methods

– Text analytics professionals

– Machine learning engineers

– AI application developers.