“Constitutional AI” is a training method for Large Language Models (LLMs) developed by Anthropic and used for Claude, its alternative to ChatGPT. It stands out because it relies less on human-written feedback, which makes it easier to scale.

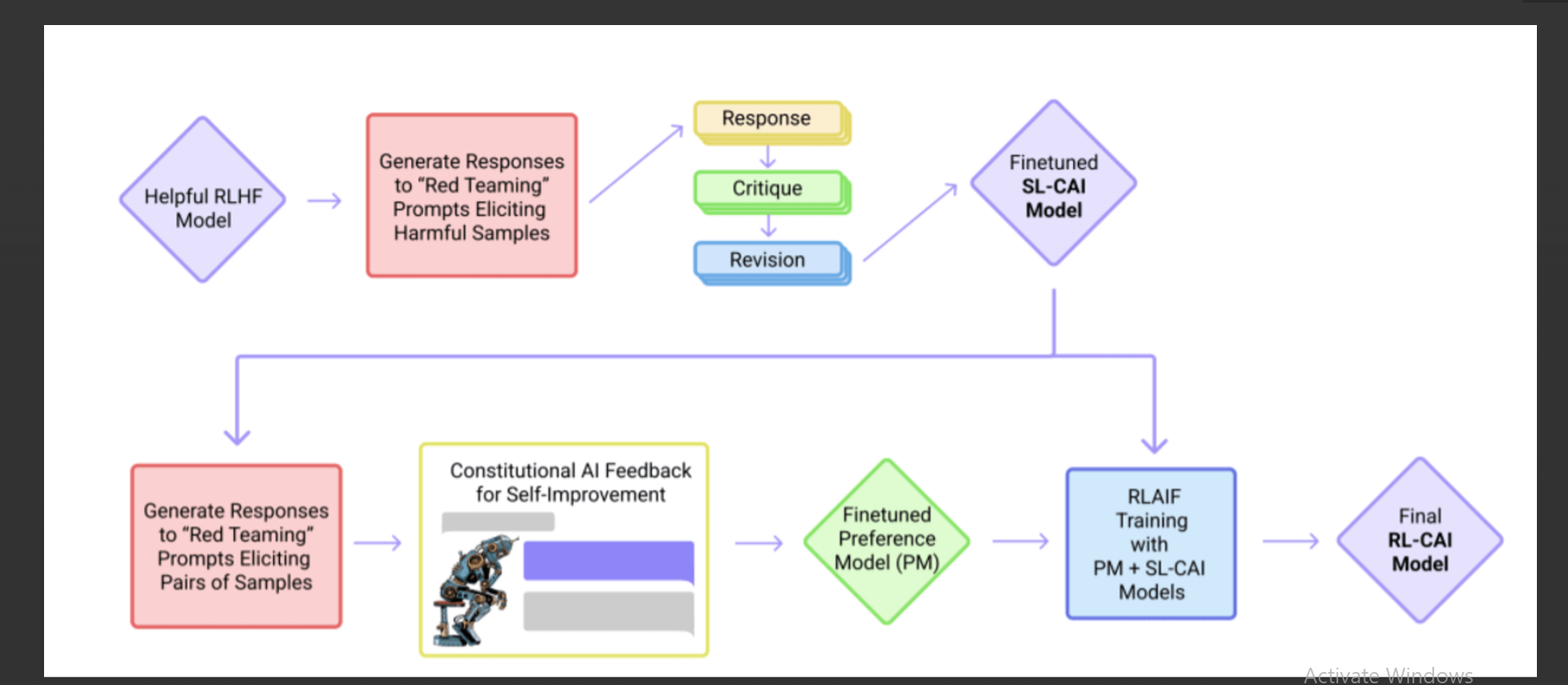

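This approach combines supervised fine-tuning (SFT) with reinforcement learning from AI feedback (RLAIF), a variant of reinforcement learning from human feedback (RLHF) in which a model, rather than human labelers, judges which responses are less harmful. The aim is to produce AI responses that follow the guiding principles (the “constitution”) established by the method’s creators. These principles place a strong emphasis on the AI acting in a helpful, trustworthy, safe, and ethical manner.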

How these guiding principles are selected, and how they are presented to the LLM, still needs further investigation and scrutiny. Among the principles given to the LLM are the need to avoid promoting unethical or illegal behaviour and to ensure that responses are non-toxic and free of prejudice. The objective is to develop a wise, peaceful, and ethical AI assistant whose outputs are not overly preachy or reactive. The approach shows how model training methods have evolved to align more closely with human values.
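As a rough illustration of how written principles can steer the supervised stage, the sketch below has a model draft a response, critique it against a randomly chosen principle, and then revise it. It is a minimal sketch, not Anthropic's implementation: the principle wording is paraphrased from the guidelines above, and `critique_and_revise`, `echo_model`, and the prompt templates are hypothetical names invented for this example. A real setup would replace `echo_model` with an actual LLM call and fine-tune on the revised responses.

```python
import random
from typing import Callable, Dict, List

# Hypothetical principles, paraphrased from the guidelines described above.
PRINCIPLES: List[str] = [
    "Do not encourage unethical or illegal behaviour.",
    "Keep the response non-toxic and free of prejudice.",
    "Avoid sounding preachy or reactive.",
]

# Any function that maps a text prompt to a text completion.
Model = Callable[[str], str]


def critique_and_revise(
    model: Model,
    prompt: str,
    principles: List[str],
    rounds: int = 2,
    seed: int = 0,
) -> Dict[str, str]:
    """Draft a response, then repeatedly critique and revise it.

    Returns a (prompt, response) pair that could serve as one
    supervised fine-tuning example.
    """
    rng = random.Random(seed)  # seeded so the principle choice is repeatable
    response = model(prompt)   # initial draft
    for _ in range(rounds):
        principle = rng.choice(principles)
        # Ask the model to critique its own draft against one principle.
        critique = model(
            f"Critique the response against this principle: {principle}\n"
            f"Response: {response}"
        )
        # Ask the model to rewrite the draft in light of that critique.
        response = model(
            f"Rewrite the response to address the critique.\n"
            f"Critique: {critique}\n"
            f"Response: {response}"
        )
    return {"prompt": prompt, "response": response}


def echo_model(text: str) -> str:
    """Stand-in for a real LLM call (assumption): echoes the last input line."""
    return "[model output] " + text.splitlines()[-1]


if __name__ == "__main__":
    example = critique_and_revise(echo_model, "How do I pick a lock?", PRINCIPLES)
    print(example["response"])
```

Each call makes one draft request plus two requests per round (critique, then revision), so the cost grows linearly with `rounds`. The reinforcement learning stage, in which a model compares pairs of responses against the principles, is not shown here.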

Likely users of “Constitutional AI” include:

- AI researchers and developers

- Ethicists concerned with AI behavior

- Organizations aiming for value-aligned AI deployments

- Science fiction enthusiasts referencing Asimov’s principles

- Academic institutions for research and study

- Tech companies developing chatbots or AI assistants

- Regulatory bodies overseeing AI ethics

Similar Apps

Replicate | Discover AI use cases

PyTorch 2.0

Chatgpt Android

TryChroma

Trudo AI