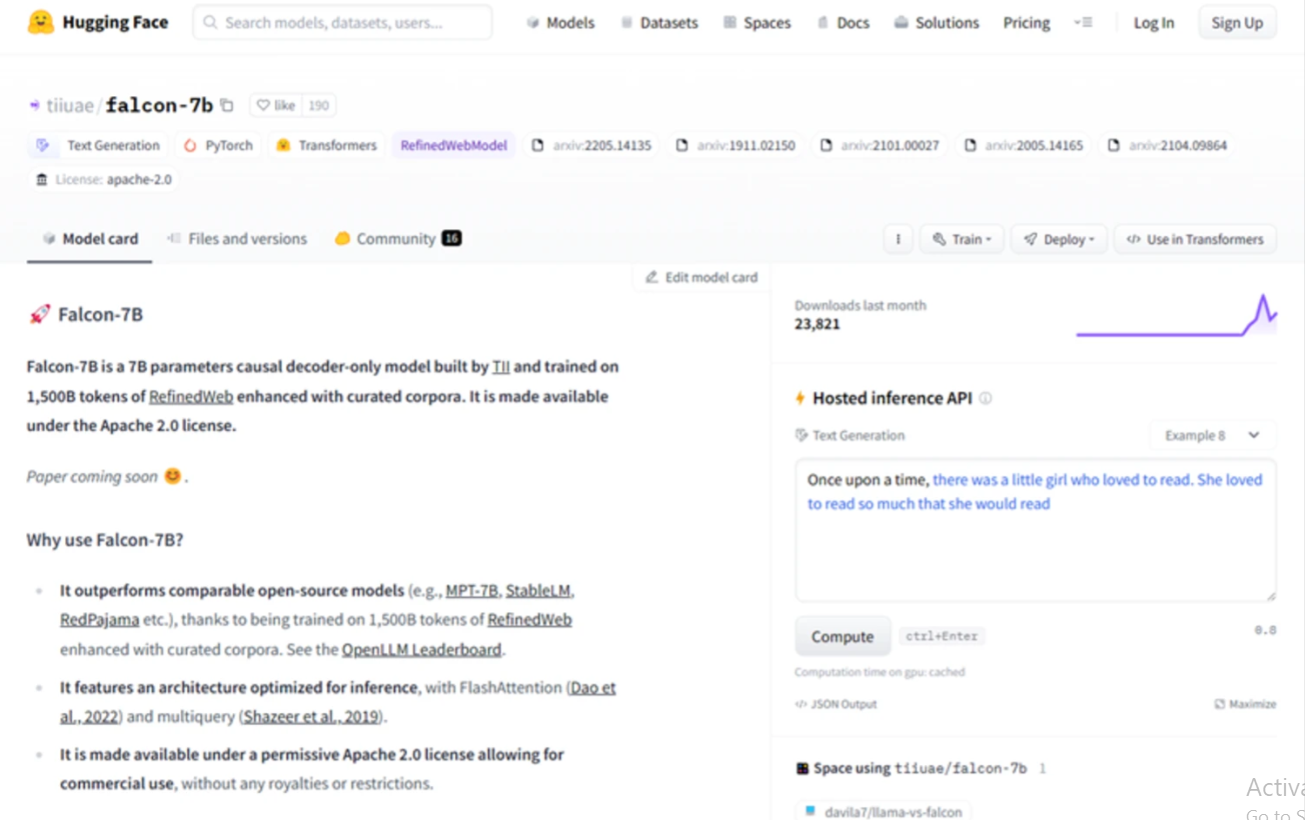

The Technology Innovation Institute (TII) in Abu Dhabi released Falcon LLM, an open-source family of language models. Falcon-40B is an autoregressive, decoder-only model with 40 billion parameters, trained on one trillion tokens; like GPT, it generates text by predicting the next token. The smaller Falcon-7B has 7 billion parameters and was trained on 1,500 billion tokens. Instruction-tuned variants for chat, Falcon-40B-Instruct and Falcon-7B-Instruct, are also available. According to TII, Falcon outperforms GPT-3 while using only 75% of its training compute, and it needs less compute at inference time. To build its training data, TII uses specialised tooling and a data pipeline that filters and deduplicates web content to extract high-quality text.
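The filter-and-deduplicate idea can be illustrated with a minimal Python sketch. This is not TII's actual pipeline: the `normalize` and `filter_and_dedupe` helpers, the `min_words` threshold, and the sample pages are all hypothetical, and the sketch only removes exact duplicates after normalization, whereas a production pipeline would likely use richer quality filters and fuzzy matching.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants hash identically."""
    return re.sub(r"\s+", " ", text.strip().lower())


def filter_and_dedupe(docs, min_words=5):
    """Drop very short documents, then drop exact duplicates (after normalization).

    `min_words` is an illustrative quality threshold, not a value used by TII.
    """
    seen = set()
    kept = []
    for doc in docs:
        norm = normalize(doc)
        # Quality filter: skip fragments such as navigation text or buttons.
        if len(norm.split()) < min_words:
            continue
        # Deduplication: compare content hashes instead of full strings.
        digest = hashlib.sha256(norm.encode("utf-8")).hexdigest()
        if digest in seen:
            continue
        seen.add(digest)
        kept.append(doc)
    return kept


# Hypothetical sample pages, invented for this illustration.
pages = [
    "Falcon is a decoder-only language model from TII.",
    "falcon is a   decoder-only language model from TII.",
    "Click here!",
    "The model was trained on filtered and deduplicated web text.",
]

cleaned = filter_and_dedupe(pages)
print(len(cleaned))
```

Here the second page is dropped as a duplicate of the first and the third is dropped as too short, so two pages remain.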

Target users: Researchers, data scientists, developers, content creators, and businesses seeking natural language processing solutions.

Similar Apps

OpenAI Codex

nanoGPT / minGPT

Muse

MT-NLG by Microsoft and NVIDIA

DeepMind RETRO