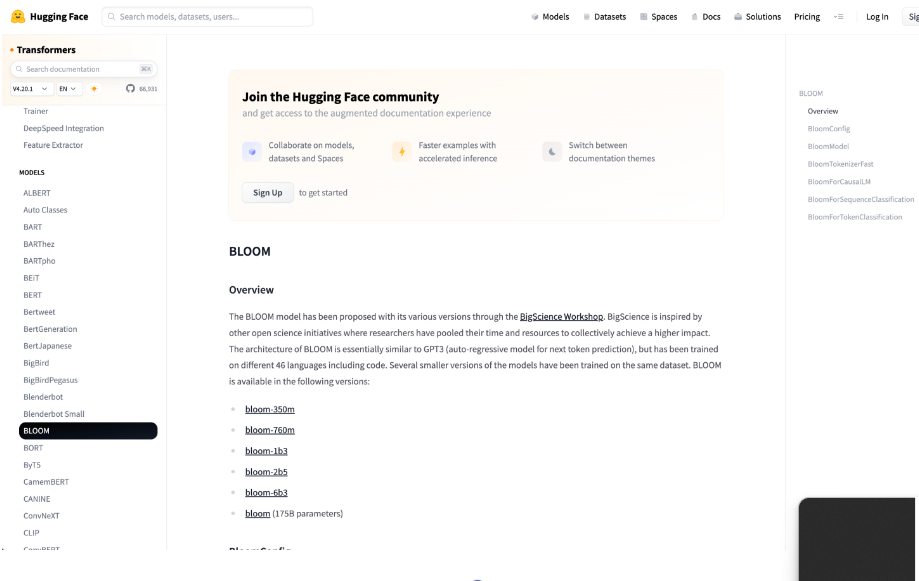

BLOOM is a multilingual large language model created by more than 1,000 AI researchers. With 176 billion parameters, it is slightly larger than GPT-3 (175 billion). Training started on March 11, 2022, took roughly four months, and ran on 384 NVIDIA A100 GPUs on France’s Jean Zay supercomputer. BLOOM’s architecture consists of 70 transformer layers, each with 112 attention heads. The model can generate text in 46 natural languages and 13 programming languages. It was built by BigScience, an open research collaboration that combines knowledge from various research fields and is supported by organisations such as Hugging Face, GENCI, and IDRIS.
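As a rough sanity check on those figures, the sketch below estimates the parameter count from the layer count using the common rule of thumb of about 12 × d² weights per transformer layer plus the token embedding. The hidden size (14336) and vocabulary size (250,880) are assumptions taken from the published model configuration, not from this page, and the function name `estimate_decoder_params` is purely illustrative. Biases and layer norms are ignored, so this is an approximation rather than an exact count.

```python
def estimate_decoder_params(n_layers: int, hidden_size: int, vocab_size: int) -> int:
    """Rough parameter count for a decoder-only transformer.

    Each layer has about 4*d^2 attention weights (Q, K, V, output projection)
    and about 8*d^2 feed-forward weights (two d-by-4d matrices). Biases and
    layer norms are ignored. The token embedding adds vocab_size * d.
    """
    per_layer = 12 * hidden_size ** 2
    embedding = vocab_size * hidden_size
    return n_layers * per_layer + embedding


# Figures quoted in the description.
N_LAYERS = 70
N_HEADS = 112

# Assumptions: values from the published model configuration, not from this page.
HIDDEN_SIZE = 14336
VOCAB_SIZE = 250_880

total = estimate_decoder_params(N_LAYERS, HIDDEN_SIZE, VOCAB_SIZE)
head_dim = HIDDEN_SIZE // N_HEADS  # width of each attention head

print(f"estimated parameters: {total / 1e9:.1f} billion")
print(f"dimension per attention head: {head_dim}")
```

Under these assumptions the estimate lands within a fraction of a percent of the 176 billion parameters quoted for the model, and each of the 112 heads works on a 128-dimensional slice of the hidden state.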

Target users:

– AI researchers

– Linguists

– Developers

– Multilingual content creators

– Data scientists

– Educators

– Students

– Tech enthusiasts

– Companies in NLP and AI sectors

– Policy and ethics experts

Similar Apps

OpenAI Codex

nanoGPT minGPT

Muse

MT-NLG by Microsoft and NVIDIA

DeepMind RETRO