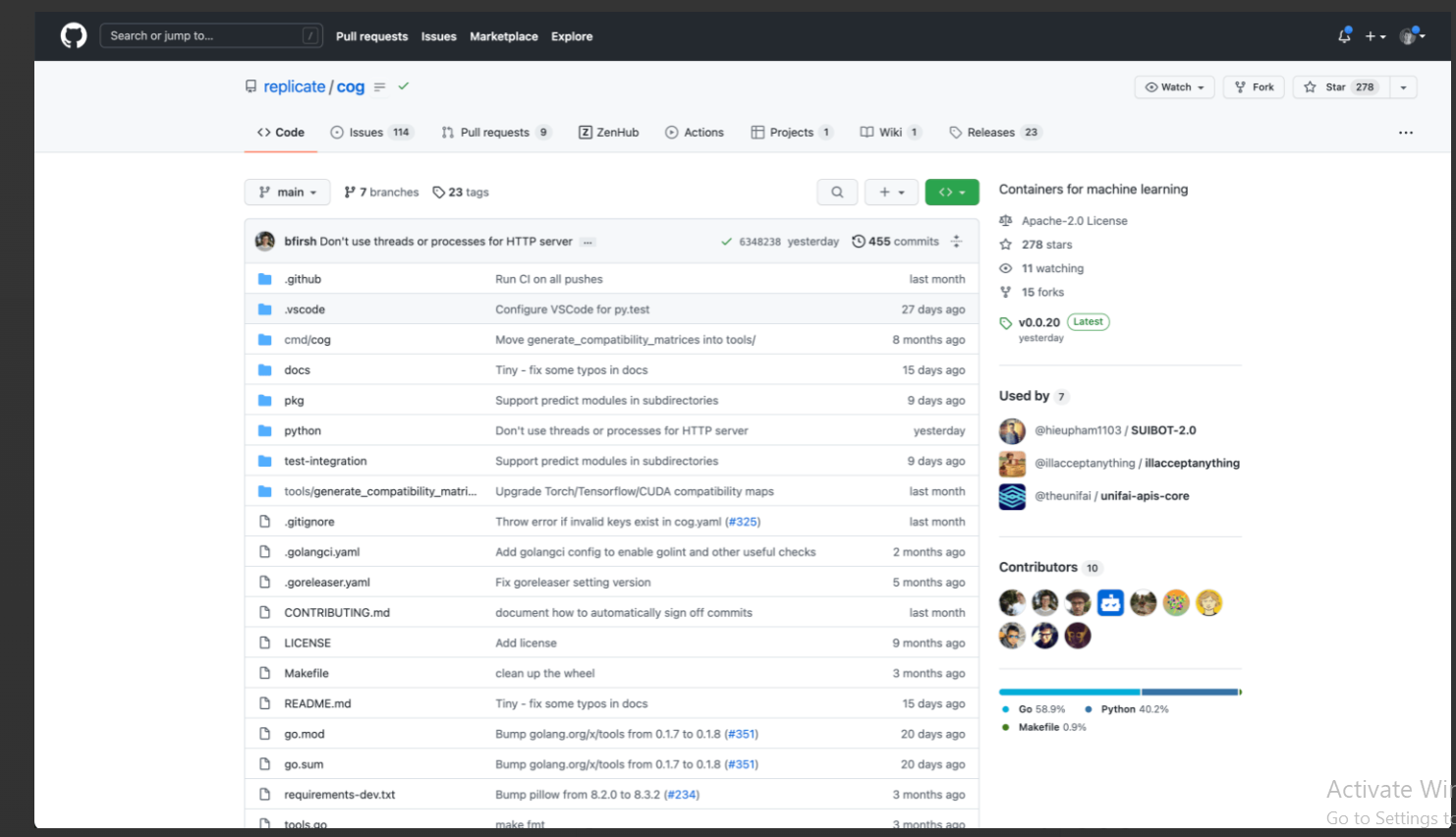

Cog is an open-source command-line tool built specifically for packaging machine learning (ML) models into Docker containers. Its main benefit is a consistent environment throughout a model's life, from early development on a personal laptop to training on high-performance GPU machines. Once the model is ready, users can package it as a Docker image that exposes a standardised HTTP API. Key features include:

- Automated Docker image creation from a simple configuration file (`cog.yaml`), removing much of the difficulty of setting up GPU environments.

- Automatic generation of an HTTP prediction service from the model's definition, so users do not need to write their own Flask server.

- Simpler dependency management: Cog works out compatible versions of CUDA, cuDNN, PyTorch, TensorFlow and Python and configures them together, easing the often difficult task of keeping an ML software stack compatible.
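Cog builds its HTTP interface from a predictor class (a subclass of `BasePredictor` whose `predict()` method's typed arguments become the API's inputs). The snippet below is not Cog's code and does not use the `cog` package. It is a minimal, standard-library-only sketch of the same general idea, assuming a plain function with simple `int`, `float` or `str` type hints. The request and response shapes (`POST /predictions` with `{"input": {...}}`, answered with `{"output": ...}`) are loosely modelled on Cog's, while `build_schema`, `make_handler`, `serve`, the `/schema` path and the toy `predict` function are hypothetical names chosen for illustration.

```python
import inspect
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Any, Callable, Dict, Tuple


def build_schema(predict: Callable[..., Any]) -> Dict[str, Dict[str, Any]]:
    """Describe each parameter of `predict` from its type hints and defaults."""
    schema: Dict[str, Dict[str, Any]] = {}
    for name, param in inspect.signature(predict).parameters.items():
        entry: Dict[str, Any] = {"type": param.annotation.__name__}
        if param.default is not inspect.Parameter.empty:
            entry["default"] = param.default
        schema[name] = entry
    return schema


def make_handler(predict: Callable[..., Any]) -> Callable[[str], Tuple[int, Dict[str, Any]]]:
    """Turn `predict` into a function mapping a JSON request body to (status, reply)."""
    params = inspect.signature(predict).parameters

    def handle(body: str) -> Tuple[int, Dict[str, Any]]:
        try:
            payload = json.loads(body)
        except json.JSONDecodeError:
            return 400, {"error": "body is not valid JSON"}
        inputs = payload.get("input", {}) if isinstance(payload, dict) else None
        if not isinstance(inputs, dict):
            return 400, {"error": 'expected {"input": {...}}'}
        kwargs: Dict[str, Any] = {}
        for name, param in params.items():
            if name in inputs:
                try:
                    # Coerce with the annotated type; adequate for int, float and str only.
                    kwargs[name] = param.annotation(inputs[name])
                except (TypeError, ValueError):
                    return 400, {"error": f"invalid value for {name}"}
            elif param.default is inspect.Parameter.empty:
                return 400, {"error": f"missing input: {name}"}
        return 200, {"output": predict(**kwargs)}

    return handle


def serve(predict: Callable[..., Any], port: int = 5000) -> None:
    """Expose `predict` over HTTP on localhost (blocks until interrupted)."""
    handle = make_handler(predict)
    schema = build_schema(predict)

    class PredictionHandler(BaseHTTPRequestHandler):
        def _reply(self, status: int, data: Dict[str, Any]) -> None:
            raw = json.dumps(data).encode("utf-8")
            self.send_response(status)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(raw)))
            self.end_headers()
            self.wfile.write(raw)

        def do_GET(self) -> None:
            # Hypothetical endpoint describing the inputs derived from the signature.
            if self.path == "/schema":
                self._reply(200, schema)
            else:
                self._reply(404, {"error": "not found"})

        def do_POST(self) -> None:
            if self.path != "/predictions":
                self._reply(404, {"error": "not found"})
                return
            length = int(self.headers.get("Content-Length", 0))
            self._reply(*handle(self.rfile.read(length).decode("utf-8")))

    HTTPServer(("127.0.0.1", port), PredictionHandler).serve_forever()


def predict(text: str, repeat: int = 1) -> str:
    """Hypothetical toy 'model': upper-cases the text and repeats it."""
    return " ".join([text.upper()] * repeat)
```

Calling `serve(predict)` and then posting `{"input": {"text": "hi", "repeat": 2}}` to `http://127.0.0.1:5000/predictions` should return `{"output": "HI HI"}`. The point of the design is that the function signature is the only place inputs are declared; the schema and the request validation are both derived from it. A real tool such as Cog also has to deal with file inputs, validation constraints, concurrency and GPU setup, all of which this sketch leaves out.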

Target users:

- Machine Learning Engineers

- Data Scientists

- DevOps Professionals

- System Administrators

- ML Model Deployers

- Research Scientists

- ML Hobbyists

